Large language models (LLMs) are reshaping the landscape of artificial intelligence. Trained on massive datasets of text and code, these models can interpret and generate human-like language with impressive fluency. From powering chatbots that hold natural conversations to generating creative content such as poems and articles, LLMs are proving versatile across a wide range of applications. As these models continue to evolve, they hold considerable potential for transforming industries, automating tasks, and augmenting human capabilities. The ethical implications of such powerful technology must be carefully considered to ensure responsible development and deployment that benefits society as a whole.

Delving into the Power of Major Models

Major models are changing the field of artificial intelligence. These powerful algorithms are trained on vast datasets, enabling them to perform a wide range of tasks. From generating human-quality text to interpreting complex images, major models are pushing the boundaries of what is possible. Their effect is visible across many fields, changing the way we interact with technology.

The potential of major models is substantial. As research continues, we can anticipate even more transformative applications on the horizon.

Major Models: A Deep Dive into Architectural Innovations

The landscape of artificial intelligence is dynamic and ever-evolving. Major models, the heavyweights driving this shift, are characterized by their immense scale and architectural complexity. These architectures have transformed various domains, from natural language processing to computer vision.

- One prominent architectural paradigm is the transformer network, known for its ability to capture long-range dependencies within sequential data. This design has driven breakthroughs in machine translation, text summarization, and question answering.

- Another notable development is the emergence of generative models, capable of creating novel content such as images. These models, often based on deep learning techniques, hold considerable potential for applications in art, design, and entertainment.
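
To make the transformer point above more concrete: the mechanism usually credited with capturing long-range dependencies is scaled dot-product attention, in which every position scores every other position directly, whatever the distance between them. The following is a minimal sketch in plain Python, not a production implementation. The function names `softmax` and `attention` and the toy two-dimensional token vectors are assumptions made purely for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Returns one output vector per query, plus the attention weights
    each query assigns to every key position.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy sequence (hypothetical embeddings): the first and last tokens match.
tokens = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = attention(tokens, tokens, tokens)
print(weights[0])
```

In this toy sequence, the first token gives the distant last token the same weight it gives itself, and more weight than its immediate neighbor, because the score depends on content rather than position. Real transformers add learned projections, multiple heads, and positional information on top of this core step.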

Continued exploration of novel architectures fuels the advancement of AI. As researchers push the boundaries of what's possible, we can expect further breakthroughs in the years to come.

Major Models: Ethical Considerations and Societal Impact

The rapid advancements in artificial intelligence, particularly in major models, raise a broad set of ethical considerations and societal impacts. Deploying these powerful algorithms requires careful scrutiny to mitigate potential biases, promote fairness, and safeguard individual privacy. Concerns about job displacement from AI-powered automation are growing, and they call for proactive measures to reskill the workforce. Moreover, the potential for misinformation through deepfakes and other synthetic media poses a serious threat to trust in information sources. Addressing these challenges requires a collaborative effort involving researchers, policymakers, industry leaders, and the public at large.

- Accountability

- Algorithmic justice

- Privacy protection

The Rise of Major Models: Applications Across Industries

The field of artificial intelligence is experiencing rapid growth, fueled by the development of powerful major models. These models, trained on massive datasets, have the potential to disrupt various industries. In healthcare, major models are being used for treatment planning. Finance is also seeing applications of these models in fraud detection. The manufacturing sector benefits from major models in process optimization. As research and development advance, we can expect more applications of major models across a wide range of industries.

Fine-Tuning Large Language Models: Benchmarks and Best Practices

Training and evaluating major models is a demanding task that requires careful attention to many factors. Successful training hinges on a combination of best practices, including careful dataset selection, hyperparameter tuning, and thorough evaluation on benchmarks.

Furthermore, the scale of major models poses unique challenges, such as computational costs and potential biases. Researchers are continually developing new techniques to mitigate these challenges and advance the field of large-scale model training.

- Best practices

- Network designs

- Evaluation metrics
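
As one illustration of what an evaluation metric can look like in practice, the sketch below computes exact match and token-level F1, two scores commonly used to compare a model's answer against a reference answer. This is a simplified sketch under stated assumptions: the helper names `exact_match` and `token_f1` are hypothetical, the example strings are made up, and the normalization (lowercasing and whitespace splitting) is deliberately minimal.

```python
from collections import Counter


def exact_match(prediction, reference):
    """True when the two strings match after trimming and lowercasing."""
    return prediction.strip().lower() == reference.strip().lower()


def token_f1(prediction, reference):
    """Token-level F1 between a predicted and a reference answer."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Count tokens shared by both answers, respecting repeats.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# Hypothetical model output scored against a reference answer.
print(exact_match("Paris ", "paris"))
print(token_f1("the cat", "the cat sat"))
```

Exact match is strict and easy to interpret, while token-level F1 gives partial credit when an answer is close but not identical. Which metric suits a given benchmark depends on the task.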

Mara Wilson Then & Now!

Mara Wilson Then & Now! Sydney Simpson Then & Now!

Sydney Simpson Then & Now! Danielle Fishel Then & Now!

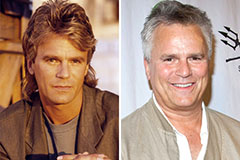

Danielle Fishel Then & Now! Richard Dean Anderson Then & Now!

Richard Dean Anderson Then & Now! McKayla Maroney Then & Now!

McKayla Maroney Then & Now!